Anthropic’s New AI Model “Claude Mythos” Leaked, Revealing a Breakthrough in AI Capabilities

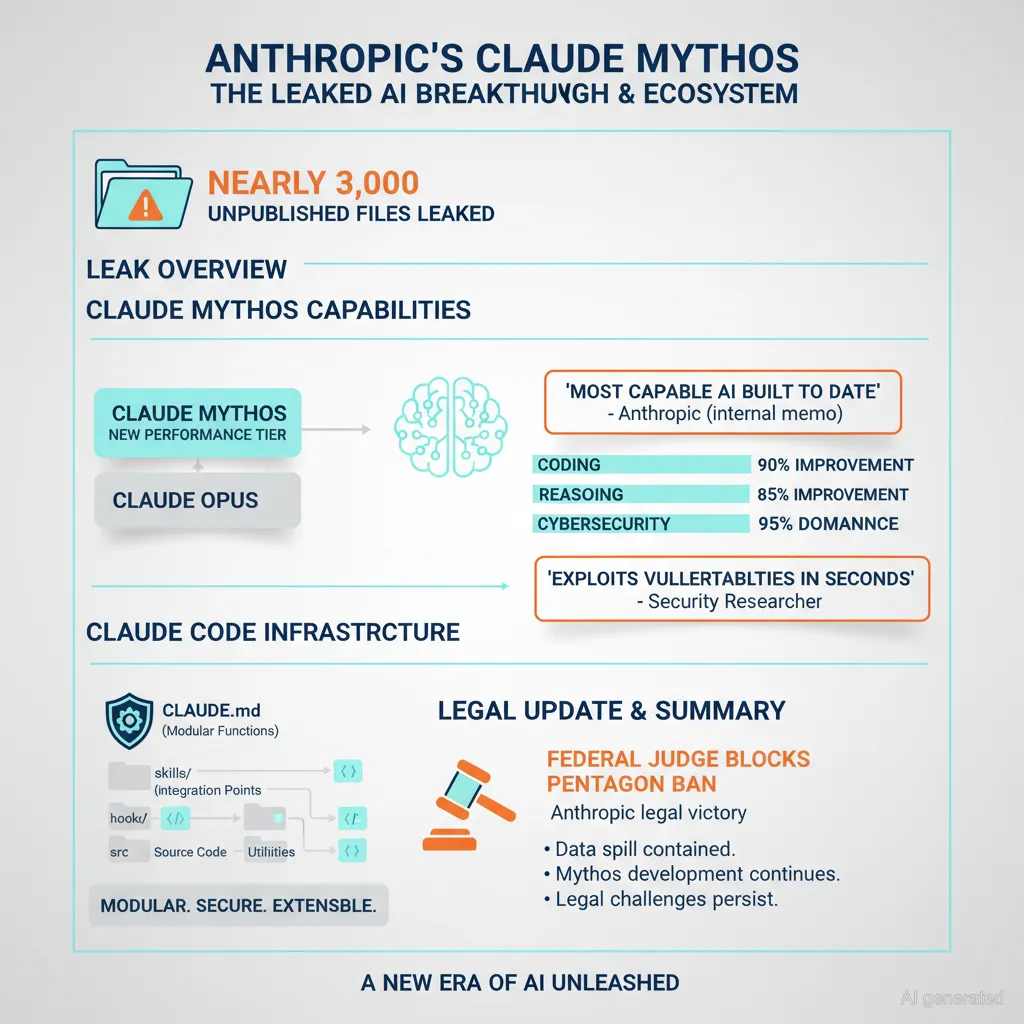

Anthropic recently experienced an accidental public leak of nearly 3,000 unpublished files, including draft blog posts that revealed its newest AI model, codenamed Claude Mythos and also referred to as Capybara. The model represents a new tier of AI, larger and more intelligent than the company’s previous flagship, Opus (Claude Opus 4.6). Cybersecurity researchers discovered the leak after finding the files in a publicly accessible data cache that had been exposed by a content management system error.

Anthropic has since confirmed the authenticity of the leak, describing Claude Mythos as “a step change” and “the most capable [AI] we’ve built to date.” The company reports that the model dramatically outperforms its earlier versions in software coding, academic reasoning, and especially cybersecurity. It emphasizes that Mythos is currently far ahead of any other AI model in cyber capabilities, to the point of raising security concerns. Anthropic warned that Mythos could “presage an upcoming wave of models that can exploit vulnerabilities far faster than defenders can react.”

Testing of Mythos is reportedly underway, with a deliberately slow rollout to a limited group of cybersecurity researchers because of its advanced and potentially risky capabilities. The model is described as part of a series named Capybara, positioned as a new, more powerful generation beyond the Opus models.

Claude Code: Advanced AI Engineering Infrastructure Leveraging Mythos

In addition to the Mythos model, the leak shed light on Anthropic’s evolving software development framework, Claude Code. Unlike traditional prompt-based chatbots, Claude Code is described as an AI engineering infrastructure embedded within software repositories. Its components include:

– CLAUDE.md for project memory and instructions,

– skills/ for reusable AI workflows,

– hooks/ for automated code checks and guardrails,

– docs/ for architecture documentation,

– src/ for code modules,

– tools/ for scripts and prompts.

This setup allows the AI to learn from errors, add rules, enforce architectural decisions, refactor code, run workflows, and generate release notes autonomously. Users reportedly configure the system only once, after which it acts like an AI teammate embedded directly in the project repository, improving development efficiency and quality.
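To make the repository layout listed above concrete, here is a minimal Python sketch that creates those entries in an empty directory. It is only an illustration under stated assumptions: the entry names and descriptions come from the list above, while the `scaffold_layout` helper and the placeholder text written into CLAUDE.md are hypothetical and are not part of Claude Code itself.

```python
from pathlib import Path
import tempfile

# Entries and descriptions as listed in the article.
# Names ending in "/" are treated as directories, the rest as files.
LAYOUT = {
    "CLAUDE.md": "project memory and instructions",
    "skills/": "reusable AI workflows",
    "hooks/": "automated code checks and guardrails",
    "docs/": "architecture documentation",
    "src/": "code modules",
    "tools/": "scripts and prompts",
}


def scaffold_layout(root: Path) -> list[str]:
    """Hypothetical helper: create the listed entries under root.

    Returns the entry names in the order they were created.
    """
    root.mkdir(parents=True, exist_ok=True)
    created = []
    for name, purpose in LAYOUT.items():
        target = root / name
        if name.endswith("/"):
            target.mkdir(exist_ok=True)
        else:
            # Placeholder content only; the real file format is not
            # described in the article.
            target.write_text(f"# {purpose}\n", encoding="utf-8")
        created.append(name)
    return created


if __name__ == "__main__":
    # Build the layout in a throwaway directory and list what was made.
    with tempfile.TemporaryDirectory() as tmp:
        for name in scaffold_layout(Path(tmp) / "example-repo"):
            print(name)
```

The sketch only sets up the folder structure; the behaviours described above, such as adding rules or generating release notes, would depend on what is actually placed in those files and directories.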

Additional Context: Legal Developments

On the same day as the leak, a federal judge blocked the Pentagon’s ban on Anthropic, marking a significant legal development for the company amid its growing prominence in AI research and deployment.

Summary

The leak of Anthropic’s next-generation AI model, Claude Mythos, highlights a major leap in AI performance, especially in complex coding and cybersecurity. Its capabilities are substantial enough that the company has adopted a cautious approach to its rollout. Complementing this, Anthropic’s Claude Code infrastructure points toward a future in which AI is deeply integrated into software engineering workflows, moving beyond simple prompt-response interactions to become a proactive development partner.

Taken together, these revelations mark a noteworthy milestone in AI development with wide-reaching implications for technology, cybersecurity, and software engineering.